Symmetric matrix

In linear algebra, a symmetric matrix is a square matrix that is equal to its transpose. Formally,

$$A \text{ is symmetric} \iff A = A^\mathsf{T}.$$

Because equal matrices have equal dimensions, only square matrices can be symmetric.

The entries of a symmetric matrix are symmetric with respect to the main diagonal. So if $a_{ij}$ denotes the entry in the $i$-th row and $j$-th column, then

$$A \text{ is symmetric} \iff a_{ji} = a_{ij}$$

for all indices $i$ and $j$.
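A minimal Python sketch of the entrywise test follows. It assumes matrices are stored as lists of rows, and `is_symmetric` is a hypothetical helper name, not a library function. It compares entries exactly, so floating-point data may need a tolerance instead.

```python
# Hypothetical helper; a matrix is a list of rows.
from typing import Sequence

def is_symmetric(a: Sequence[Sequence[float]]) -> bool:
    """Return True if the list-of-rows matrix `a` equals its transpose."""
    n = len(a)
    # Only square matrices can be symmetric.
    if any(len(row) != n for row in a):
        return False
    # Compare each entry above the diagonal with its mirror image below it.
    return all(a[i][j] == a[j][i] for i in range(n) for j in range(i + 1, n))

print(is_symmetric([[1, 7, 3], [7, 4, 5], [3, 5, 2]]))  # True
print(is_symmetric([[1, 2], [3, 4]]))                   # False
print(is_symmetric([[1, 2, 3], [2, 4, 5]]))             # False (not square)
```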

Every square diagonal matrix is symmetric, since all off-diagonal elements are zero. Similarly, in characteristic different from 2, each diagonal element of a skew-symmetric matrix must be zero, since each is its own negative.

In linear algebra, a real symmetric matrix represents a self-adjoint operator[1] over a real inner product space. The corresponding object for a complex inner product space is a Hermitian matrix with complex-valued entries, which is equal to its conjugate transpose. Therefore, in linear algebra over the complex numbers, it is often assumed that a symmetric matrix refers to one which has real-valued entries. Symmetric matrices appear naturally in a variety of applications, and typical numerical linear algebra software makes special accommodations for them.

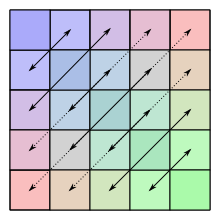

Example

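The following $3 \times 3$ matrix is symmetric, since $A = A^\mathsf{T}$:

$$A = \begin{bmatrix} 1 & 7 & 3 \\ 7 & 4 & 5 \\ 3 & 5 & 2 \end{bmatrix}$$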

Properties

Basic properties

- The sum and difference of two symmetric matrices are again symmetric.

- This is not always true for the product: given symmetric matrices $A$ and $B$, the product $AB$ is symmetric if and only if $A$ and $B$ commute, i.e., if $AB = BA$.

- For any integer $n$, $A^n$ is symmetric if $A$ is symmetric (for negative $n$, provided $A$ is invertible).

- If $A^{-1}$ exists, it is symmetric if and only if $A$ is symmetric.
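The following Python sketch illustrates these properties on small integer matrices, though it does not prove them. The helpers `transpose`, `matmul`, `add` and `is_symmetric` are hypothetical names introduced here. $C = A + I$ is chosen because it commutes with $A$, while $B$ does not.

```python
# Hypothetical helpers; matrices are lists of rows.
def transpose(a):
    """Transpose of a list-of-rows matrix."""
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    """Matrix product of two list-of-rows matrices."""
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def add(a, b, sign=1):
    """Entrywise a + b (sign=1) or a - b (sign=-1)."""
    return [[x + sign * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def is_symmetric(a):
    return a == transpose(a)

A = [[1, 2], [2, 3]]
B = [[0, 1], [1, 0]]   # symmetric, but AB != BA
C = [[2, 2], [2, 4]]   # C = A + I, which commutes with A

print(is_symmetric(add(A, B)), is_symmetric(add(A, B, -1)))      # True True
print(matmul(A, B) == matmul(B, A), is_symmetric(matmul(A, B)))  # False False
print(matmul(A, C) == matmul(C, A), is_symmetric(matmul(A, C)))  # True True
print(matmul(A, matmul(A, A)))                                   # [[21, 34], [34, 55]]
```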

Decomposition into symmetric and skew-symmetric

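Let $\mathrm{Mat}_n$ denote the space of $n \times n$ matrices. A symmetric $n \times n$ matrix is determined by $\tfrac{1}{2}n(n+1)$ scalars (the number of entries on or above the main diagonal). Similarly, a skew-symmetric matrix is determined by $\tfrac{1}{2}n(n-1)$ scalars (the number of entries above the main diagonal). If $\mathrm{Sym}_n$ denotes the space of $n \times n$ symmetric matrices and $\mathrm{Skew}_n$ the space of $n \times n$ skew-symmetric matrices, then $\mathrm{Mat}_n = \mathrm{Sym}_n + \mathrm{Skew}_n$ and $\mathrm{Sym}_n \cap \mathrm{Skew}_n = \{0\}$, i.e.

$$\mathrm{Mat}_n = \mathrm{Sym}_n \oplus \mathrm{Skew}_n,$$

where $\oplus$ denotes the direct sum. Let $X \in \mathrm{Mat}_n$; then

$$X = \tfrac{1}{2}\left(X + X^\mathsf{T}\right) + \tfrac{1}{2}\left(X - X^\mathsf{T}\right).$$

Notice that $\tfrac{1}{2}\left(X + X^\mathsf{T}\right) \in \mathrm{Sym}_n$ and $\tfrac{1}{2}\left(X - X^\mathsf{T}\right) \in \mathrm{Skew}_n$. This is true for every square matrix $X$ with entries from any field whose characteristic is different from 2.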
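Here is a short Python sketch of the splitting $X = \tfrac{1}{2}(X + X^\mathsf{T}) + \tfrac{1}{2}(X - X^\mathsf{T})$. It assumes list-of-rows matrices over the rationals, which have characteristic 0, so halving is allowed. `sym_skew_parts` is a hypothetical helper name.

```python
# Hypothetical helper names; matrices are lists of rows with rational entries.
from fractions import Fraction

def transpose(a):
    return [list(col) for col in zip(*a)]

def sym_skew_parts(x):
    """Return ((X + X^T)/2, (X - X^T)/2) using exact rational arithmetic."""
    xt = transpose(x)
    half = Fraction(1, 2)
    sym = [[half * (p + q) for p, q in zip(r, s)] for r, s in zip(x, xt)]
    skew = [[half * (p - q) for p, q in zip(r, s)] for r, s in zip(x, xt)]
    return sym, skew

X = [[1, 2, 0], [4, 5, 6], [7, 8, 9]]
S, K = sym_skew_parts(X)

print(S == transpose(S))                                  # True: S is symmetric
print(K == [[-v for v in row] for row in transpose(K)])   # True: K is skew-symmetric
print([[s + k for s, k in zip(rs, rk)] for rs, rk in zip(S, K)] == X)  # True: S + K = X
```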

Any matrix congruent to a symmetric matrix is again symmetric: if $X$ is a symmetric matrix, then so is $AXA^\mathsf{T}$ for any matrix $A$. A real symmetric matrix is necessarily a normal matrix.
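The Python sketch below forms $AXA^\mathsf{T}$ from a symmetric $2 \times 2$ matrix $X$ and a rectangular $3 \times 2$ matrix $A$, then checks that the result is symmetric. This is an illustration, not a proof. When $A$ is square and invertible this is a congruence in the strict sense, but the symmetry conclusion holds for any $A$ of compatible shape, since $(AXA^\mathsf{T})^\mathsf{T} = AX^\mathsf{T}A^\mathsf{T}$. The helper names are hypothetical.

```python
# Hypothetical helpers; matrices are lists of rows.
def transpose(a):
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

X = [[2, -1], [-1, 3]]           # symmetric 2 x 2
A = [[1, 2], [0, 1], [3, -1]]    # arbitrary 3 x 2; need not be square or invertible
M = matmul(A, matmul(X, transpose(A)))

print(M)                   # [[10, 5, -5], [5, 3, -6], [-5, -6, 27]]
print(M == transpose(M))   # True
```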

Real symmetric matrices

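Denote by $\langle \cdot, \cdot \rangle$ the standard inner product on $\mathbb{R}^n$. The real $n \times n$ matrix $A$ is symmetric if and only if

$$\langle A\mathbf{x}, \mathbf{y} \rangle = \langle \mathbf{x}, A\mathbf{y} \rangle \quad \text{for all } \mathbf{x}, \mathbf{y} \in \mathbb{R}^n.$$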

Since this definition is independent of the choice of basis, symmetry is a property that depends only on the linear operator $A$ and a choice of inner product. This characterization of symmetry is useful, for example, in differential geometry, since each tangent space to a manifold may be endowed with an inner product, giving rise to what is called a Riemannian manifold. The same formulation is also used in Hilbert spaces.
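Because the inner product is bilinear, it suffices to test the inner-product characterization on pairs of standard basis vectors. For $\mathbf{x} = \mathbf{e}_j$ and $\mathbf{y} = \mathbf{e}_i$ it reduces to $a_{ij} = a_{ji}$. Below is a Python sketch of that check. The helper names are hypothetical, and a square input matrix is assumed.

```python
# Hypothetical helpers; a square matrix is a list of rows.
def matvec(a, x):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(aij * xj for aij, xj in zip(row, x)) for row in a]

def dot(x, y):
    """Standard inner product on R^n."""
    return sum(p * q for p, q in zip(x, y))

def is_self_adjoint(a):
    """Test <Ax, y> == <x, Ay> on all pairs of standard basis vectors.

    By bilinearity this is equivalent to the identity for all x, y;
    for x = e_j, y = e_i it reduces to a[i][j] == a[j][i].
    """
    n = len(a)
    basis = [[1 if k == i else 0 for k in range(n)] for i in range(n)]
    return all(dot(matvec(a, x), y) == dot(x, matvec(a, y))
               for x in basis for y in basis)

print(is_self_adjoint([[1, 7, 3], [7, 4, 5], [3, 5, 2]]))  # True
print(is_self_adjoint([[1, 2], [3, 4]]))                   # False
```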

The finite-dimensional spectral theorem says that any symmetric matrix whose entries are real can be diagonalized by an orthogonal matrix. More explicitly: for every real symmetric matrix $A$ there exists a real orthogonal matrix $Q$ such that $D = Q^\mathsf{T} A Q$ is a diagonal matrix. Every real symmetric matrix is thus, up to choice of an orthonormal basis, a diagonal matrix.
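One classical way to compute such a $Q$ numerically is the cyclic Jacobi eigenvalue method. It repeatedly applies plane rotations, each chosen to zero one off-diagonal entry. The Python sketch below is a deliberately simple version that forms each rotation as a full matrix, which costs far more than necessary. It is meant only to illustrate that $Q^\mathsf{T} A Q$ becomes diagonal. `jacobi_eigen`, `tol` and `max_sweeps` are hypothetical names, and practical code would normally call a LAPACK-based symmetric eigensolver instead.

```python
# Hypothetical helper names; a real symmetric matrix is a list of rows.
import math

def transpose(a):
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def jacobi_eigen(a, tol=1e-20, max_sweeps=100):
    """Cyclic Jacobi method: returns (eigenvalues, q) with q^T a q ~ diagonal."""
    n = len(a)
    d = [[float(v) for v in row] for row in a]                 # driven towards diagonal form
    q = [[float(i == j) for j in range(n)] for i in range(n)]  # accumulated rotations
    for _ in range(max_sweeps):
        # Sum of squares of off-diagonal entries measures distance from diagonal.
        off = sum(d[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for r in range(p + 1, n):
                if d[p][r] == 0.0:
                    continue
                # Angle with tan(2*theta) = 2 d_pr / (d_rr - d_pp), |theta| <= pi/4,
                # so that the rotated (p, r) entry becomes zero.
                if d[r][r] == d[p][p]:
                    theta = math.pi / 4
                else:
                    theta = 0.5 * math.atan(2 * d[p][r] / (d[r][r] - d[p][p]))
                c, s = math.cos(theta), math.sin(theta)
                j = [[float(i == k) for k in range(n)] for i in range(n)]
                j[p][p] = j[r][r] = c
                j[p][r], j[r][p] = s, -s
                d = matmul(transpose(j), matmul(d, j))  # orthogonal similarity
                q = matmul(q, j)
    return [d[i][i] for i in range(n)], q

A = [[2, 1, 0], [1, 2, 1], [0, 1, 2]]
eigenvalues, Q = jacobi_eigen(A)
print([round(v, 6) for v in sorted(eigenvalues)])  # [0.585786, 2.0, 3.414214]
```

The columns of `Q` are the orthonormal eigenvectors, so `Q` plays the role of $Q$ in the statement above.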

If $A$ and $B$ are $n \times n$ real symmetric matrices that commute, then they can be simultaneously diagonalized by an orthogonal matrix: there exists an orthonormal basis of $\mathbb{R}^n$ such that every element of the basis is an eigenvector for both $A$ and $B$.

Every real symmetric matrix is Hermitian, and therefore all its eigenvalues are real. (In fact, the eigenvalues are the entries in the diagonal matrix $D$ (above), and therefore $D$ is uniquely determined by $A$ up to the order of its entries.) Essentially, the property of being symmetric for real matrices corresponds to the property of being Hermitian for complex matrices.

Complex symmetric matrices

A complex symmetric matrix can be 'diagonalized' using a unitary matrix: thus if $A$ is a complex symmetric matrix, there is a unitary matrix $U$ such that $UAU^\mathsf{T}$ is a real diagonal matrix with non-negative entries. This result is referred to as the Autonne–Takagi factorization. It was originally proved by Léon Autonne (1915) and Teiji Takagi (1925) and rediscovered with different proofs by several other mathematicians.[2][3]

In fact, the matrix $B = A^\dagger A$ is Hermitian and positive semi-definite, so there is a unitary matrix $V$ such that $V^\dagger B V$ is diagonal with non-negative real entries. Thus $C = V^\mathsf{T} A V$ is complex symmetric with $C^\dagger C$ real. Writing $C = X + iY$ with $X$ and $Y$ real symmetric matrices, $C^\dagger C = X^2 + Y^2 + i(XY - YX)$. Thus $XY = YX$. Since $X$ and $Y$ commute, there is a real orthogonal matrix $W$ such that both $WXW^\mathsf{T}$ and $WYW^\mathsf{T}$ are diagonal. Setting $U = WV^\mathsf{T}$ (a unitary matrix), the matrix $UAU^\mathsf{T}$ is complex diagonal. By pre-multiplying $U$ by a suitable diagonal unitary matrix (which preserves unitarity of $U$), the diagonal entries of $UAU^\mathsf{T}$ can be made real and non-negative as desired. Concretely, if $UAU^\mathsf{T} = \operatorname{diag}(r_1 e^{i\theta_1}, \ldots, r_n e^{i\theta_n})$ with $r_k \ge 0$, take $E = \operatorname{diag}(e^{-i\theta_1/2}, \ldots, e^{-i\theta_n/2})$, so that $(EU)A(EU)^\mathsf{T} = \operatorname{diag}(r_1, \ldots, r_n)$. Since their squares are the eigenvalues of $A^\dagger A$, these diagonal entries coincide with the singular values of $A$. (Note that the Jordan normal form of a complex symmetric matrix $A$ may not be diagonal, so $A$ may not be diagonalizable by any similarity transformation.)

Decomposition

Using the Jordan normal form, one can prove that every square real matrix can be written as a product of two real symmetric matrices, and every square complex matrix can be written as a product of two complex symmetric matrices.[4]

Every real non-singular matrix can be uniquely factored as the product of an orthogonal matrix and a symmetric positive definite matrix, which is called a polar decomposition. Singular matrices can also be factored, but not uniquely.

Cholesky decomposition states that every real positive-definite symmetric matrix $A$ is a product of a lower-triangular matrix $L$ and its transpose, $A = LL^\mathsf{T}$. If the matrix is symmetric indefinite, it may still be decomposed as $PAP^\mathsf{T} = LDL^\mathsf{T}$. Here $P$ is a permutation matrix (arising from the need to pivot), $L$ is a lower unit triangular matrix, and $D$ is a direct sum of symmetric $1 \times 1$ and $2 \times 2$ blocks. This is called the Bunch–Kaufman decomposition.[5]
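A minimal pure-Python sketch of the Cholesky factorization $A = LL^\mathsf{T}$ follows, computed column by column. `cholesky` is a hypothetical helper name. It does not implement the pivoted Bunch–Kaufman variant for indefinite matrices. Instead, it stops with an error when a non-positive pivot appears, which signals that the input is not positive definite.

```python
# Hypothetical helper; a symmetric matrix is a list of rows.
import math

def cholesky(a):
    """Lower-triangular L with a == L L^T, for a symmetric positive-definite a.

    Raises ValueError on a non-positive pivot, which means a is not
    positive definite (up to rounding).
    """
    n = len(a)
    l = [[0.0] * n for _ in range(n)]
    for j in range(n):
        pivot = a[j][j] - sum(l[j][k] ** 2 for k in range(j))
        if pivot <= 0:
            raise ValueError("matrix is not positive definite")
        l[j][j] = math.sqrt(pivot)
        for i in range(j + 1, n):
            l[i][j] = (a[i][j] - sum(l[i][k] * l[j][k] for k in range(j))) / l[j][j]
    return l

print(cholesky([[4, 12, -16], [12, 37, -43], [-16, -43, 98]]))
# [[2.0, 0.0, 0.0], [6.0, 1.0, 0.0], [-8.0, 5.0, 3.0]]

try:
    cholesky([[1, 2], [2, 1]])  # symmetric but indefinite (eigenvalues 3 and -1)
except ValueError as err:
    print(err)  # matrix is not positive definite
```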

A complex symmetric matrix may not be diagonalizable by similarity; every real symmetric matrix is diagonalizable by a real orthogonal similarity.

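Every complex symmetric matrix $A$ can be diagonalized by unitary congruence

$$A = Q \Lambda Q^\mathsf{T}$$

where $Q$ is a unitary matrix. If $A$ is real, the matrix $Q$ is a real orthogonal matrix (the columns of which are eigenvectors of $A$), and $\Lambda$ is real and diagonal (having the eigenvalues of $A$ on the diagonal). To see orthogonality, suppose $\mathbf{x}$ and $\mathbf{y}$ are eigenvectors corresponding to distinct eigenvalues $\lambda_1$, $\lambda_2$. Then

$$\lambda_1 \langle \mathbf{x}, \mathbf{y} \rangle = \langle A\mathbf{x}, \mathbf{y} \rangle = \langle \mathbf{x}, A\mathbf{y} \rangle = \lambda_2 \langle \mathbf{x}, \mathbf{y} \rangle.$$

Since $\lambda_1$ and $\lambda_2$ are distinct, we have $\langle \mathbf{x}, \mathbf{y} \rangle = 0$.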
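As a small worked instance of the orthogonality argument, take the illustrative real symmetric matrix below, chosen here for simplicity:

$$A = \begin{bmatrix} 2 & 1 \\ 1 & 2 \end{bmatrix}, \qquad A\begin{bmatrix} 1 \\ 1 \end{bmatrix} = 3\begin{bmatrix} 1 \\ 1 \end{bmatrix}, \qquad A\begin{bmatrix} 1 \\ -1 \end{bmatrix} = 1 \cdot \begin{bmatrix} 1 \\ -1 \end{bmatrix}, \qquad \left\langle \begin{bmatrix} 1 \\ 1 \end{bmatrix}, \begin{bmatrix} 1 \\ -1 \end{bmatrix} \right\rangle = 1 - 1 = 0.$$

Normalizing the eigenvectors gives $Q = \tfrac{1}{\sqrt{2}}\begin{bmatrix} 1 & 1 \\ 1 & -1 \end{bmatrix}$ and $\Lambda = \operatorname{diag}(3, 1)$, and multiplying out confirms $Q \Lambda Q^\mathsf{T} = A$.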

Hessian

Symmetric $n \times n$ matrices of real functions appear as the Hessians of twice continuously differentiable functions of $n$ real variables.
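For instance, the illustrative function $f(x, y) = x^2 y + y^3$, chosen here as an example, has

$$\nabla^2 f(x, y) = \begin{bmatrix} \partial_{xx} f & \partial_{xy} f \\ \partial_{yx} f & \partial_{yy} f \end{bmatrix} = \begin{bmatrix} 2y & 2x \\ 2x & 6y \end{bmatrix},$$

which is symmetric because the mixed partial derivatives agree (Schwarz's theorem).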

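Every quadratic form $q$ on $\mathbb{R}^n$ can be uniquely written in the form $q(\mathbf{x}) = \mathbf{x}^\mathsf{T} A \mathbf{x}$ with a symmetric $n \times n$ matrix $A$. Because of the above spectral theorem, one can then say that every quadratic form, up to the choice of an orthonormal basis of $\mathbb{R}^n$, "looks like"

$$q(x_1, \ldots, x_n) = \sum_{i=1}^{n} \lambda_i x_i^2$$

with real numbers $\lambda_i$. This considerably simplifies the study of quadratic forms, as well as the study of the level sets $\left\{ \mathbf{x} : q(\mathbf{x}) = 1 \right\}$, which are generalizations of conic sections.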
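A quadratic form is often first written with a non-symmetric matrix $M$, as $q(\mathbf{x}) = \mathbf{x}^\mathsf{T} M \mathbf{x}$. Replacing $M$ by its symmetric part $\tfrac{1}{2}(M + M^\mathsf{T})$ does not change $q$, because $\mathbf{x}^\mathsf{T} K \mathbf{x} = 0$ for every skew-symmetric $K$. This is one way to obtain the symmetric $A$. Below is a Python sketch with hypothetical helper names and an example form chosen for illustration.

```python
# Hypothetical helper names; matrices are lists of rows.
from fractions import Fraction

def quad_form(m, x):
    """Evaluate x^T M x for a square list-of-rows matrix M."""
    n = len(x)
    return sum(x[i] * m[i][j] * x[j] for i in range(n) for j in range(n))

def symmetric_part(m):
    """(M + M^T) / 2, computed exactly with Fractions."""
    n = len(m)
    return [[Fraction(m[i][j] + m[j][i], 2) for j in range(n)] for i in range(n)]

# q(x1, x2) = x1^2 + 4*x1*x2 + 3*x2^2, written with a non-symmetric matrix M.
M = [[1, 4], [0, 3]]
A = symmetric_part(M)
print(A == [[1, 2], [2, 3]])  # True
for x in ([1, 0], [1, 1], [2, -3], [5, 7]):
    print(quad_form(M, x), quad_form(A, x))  # 1 1, then 8 8, 7 7, 312 312
```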

This is important partly because the second-order behavior of every smooth multi-variable function is described by the quadratic form belonging to the function's Hessian; this is a consequence of Taylor's theorem.

Symmetrizable matrix

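An $n \times n$ matrix $A$ is said to be symmetrizable if there exists an invertible diagonal matrix $D$ and a symmetric matrix $S$ such that $A = DS$.

The transpose of a symmetrizable matrix is symmetrizable, since $A^\mathsf{T} = (DS)^\mathsf{T} = SD = D^{-1}(DSD)$ and $DSD$ is symmetric. A matrix $A = (a_{ij})$ is symmetrizable if and only if the following conditions are met:

- $a_{ij} = 0$ implies $a_{ji} = 0$ for all $1 \le i, j \le n$.

- $a_{i_1 i_2} a_{i_2 i_3} \cdots a_{i_k i_1} = a_{i_2 i_1} a_{i_3 i_2} \cdots a_{i_1 i_k}$ for any finite sequence $(i_1, i_2, \ldots, i_k)$.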
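The Python sketch below builds a symmetrizable matrix $A = DS$ from an example $D$ and $S$ chosen here. It checks the transpose identity and spot-checks both conditions for index sequences up to length 3. Indices are 0-based in the code, and the helper names are hypothetical. A bounded check like this can refute symmetrizability but cannot prove it in general. The second matrix `B` fails the cycle condition for the sequence $(0, 1, 2)$. Sequences of length 1 or 2 can never fail, because both sides of the equation are then the same product.

```python
# Hypothetical helper names; matrices are lists of rows, indices are 0-based.
from fractions import Fraction
from itertools import product
from math import prod

def transpose(a):
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def is_symmetric(a):
    return a == transpose(a)

def diag(entries):
    n = len(entries)
    return [[entries[i] if i == j else 0 for j in range(n)] for i in range(n)]

def zero_pattern_symmetric(a):
    """First condition: a[i][j] == 0 exactly when a[j][i] == 0."""
    n = len(a)
    return all((a[i][j] == 0) == (a[j][i] == 0) for i in range(n) for j in range(n))

def satisfies_cycle_condition(a, max_len):
    """Second condition, spot-checked for index sequences of length <= max_len."""
    n = len(a)
    for k in range(1, max_len + 1):
        for seq in product(range(n), repeat=k):
            nxt = seq[1:] + seq[:1]   # i_2, ..., i_k, i_1
            forward = prod(a[i][j] for i, j in zip(seq, nxt))
            backward = prod(a[j][i] for i, j in zip(seq, nxt))
            if forward != backward:
                return False
    return True

D = diag([1, 2, 3])                      # invertible diagonal
S = [[1, 1, 0], [1, 2, 1], [0, 1, 5]]    # symmetric
A = matmul(D, S)                         # symmetrizable by construction
DSD = matmul(D, matmul(S, D))
D_inv = diag([Fraction(1, d) for d in (1, 2, 3)])

print(is_symmetric(A), is_symmetric(DSD))     # False True
print(transpose(A) == matmul(D_inv, DSD))     # True: A^T = D^{-1}(DSD)
print(zero_pattern_symmetric(A), satisfies_cycle_condition(A, 3))  # True True

B = [[0, 1, 1], [1, 0, 1], [2, 1, 0]]
print(satisfies_cycle_condition(B, 3))        # False: the cycle (0, 1, 2) fails
```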

See also

Other types of symmetry or pattern in square matrices have special names; see for example:

See also symmetry in mathematics.

Notes

- [1] Jesús Rojo García (1986). Álgebra lineal (in Spanish) (2nd ed.). Editorial AC. ISBN 84-7288-120-2.

- [2] Horn & Johnson 2013, p. 278.

- [3] See:

  - Autonne, L. (1915), "Sur les matrices hypohermitiennes et sur les matrices unitaires", Ann. Univ. Lyon, 38: 1–77

  - Takagi, T. (1925), "On an algebraic problem related to an analytic theorem of Carathéodory and Fejér and on an allied theorem of Landau", Japan. J. Math., 1: 83–93

  - Siegel, Carl Ludwig (1943), "Symplectic Geometry", American Journal of Mathematics, 65: 1–86, doi:10.2307/2371774, JSTOR 2371774, Lemma 1, page 12

  - Hua, L.-K. (1944), "On the theory of automorphic functions of a matrix variable I–geometric basis", Amer. J. Math., 66: 470–488, doi:10.2307/2371910

  - Schur, I. (1945), "Ein Satz über quadratische formen mit komplexen koeffizienten", Amer. J. Math., 67: 472–480, doi:10.2307/2371974

  - Benedetti, R.; Cragnolini, P. (1984), "On simultaneous diagonalization of one Hermitian and one symmetric form", Linear Algebra Appl., 57: 215–226, doi:10.1016/0024-3795(84)90189-7

- [4] Bosch, A. J. (1986). "The factorization of a square matrix into two symmetric matrices". American Mathematical Monthly. 93 (6): 462–464. doi:10.2307/2323471. JSTOR 2323471.

- [5] Golub, G. H.; Van Loan, C. F. (1996). Matrix Computations. The Johns Hopkins University Press, Baltimore, London.

References

- Horn, Roger A.; Johnson, Charles R. (2013), Matrix analysis (2nd ed.), Cambridge University Press, ISBN 978-0-521-54823-6